Lots of places don't do agile right; in fact, most do it wrong. There are a number of reasons why. Chief among them, I believe, is that so few people have worked somewhere that nailed it completely that there aren't enough of them to go around and lead by example. So here are a few of the tips I've been given or learnt over the years. Credit has to go to all the wonderful places I've worked: the ones that have done it well, and the ones that have done it poorly… you can learn just as much from reflecting on where it all went wrong.

Back to basics

Are you already using agile, or are you planning on implementing it in your company? If you're reading this, chances are something isn't working as well as you'd like and so it's time to reset. The first thing to do is throw out your preconceptions about agile and ignore almost everything you've read in practitioner manuals, because at its core it's really quite simple. Most problems stem from people picking only certain features of agile or trying to let a tool guide the process for them.

Refocus on the manifesto

It's worth going back and looking at the agile manifesto and then questioning each aspect of your process to ensure it is giving priority to the values on the left side. If it's not, remove it.

Throw out the tools you're using

Scrumworks? Urgh! VersionOne? Atlassian? They're all cut from a similar cloth. If you're not already doing agile correctly, they will just get in the way. So until you're up and running, get them as far away as possible.

For planning, all you need are some index cards and a pen. If that doesn't offer you enough protection for your DRP, or won't work because you've got a distributed team, then use a wiki. Again, avoid at all costs anything more complicated until you've got it all working.

I mention later in this post that much of this insight on how to do agile properly came from working with Graham Ashton. He's since written an agile planning tool. Unsurprisingly it avoids all the problems I mention above. If you want a web-based tool, check it out.

Planning

The great thing about stripping it back to basics is that it re-aligns focus on the things that are important. You're not distracted by calculating backlog points and predicting velocity. Instead you are collaborating with your customer to understand what they will consider working software.

You need to become the customer

As I mentioned in my last post, nobody cares what tools you use; your customer is trusting you to make the right decisions to do the best job. You can only really do that when you understand the requirements completely, which means a full and deep appreciation of why the work is being done and not simply what needs to be implemented.

Your customer is unlikely to be a software developer so their scope, as broad as it will be, is limited by their experience. They also can't possibly know upfront all the questions you want answered and all the seemingly irrelevant detail that would feed into giving the best user experience possible. Expecting them to bridge this gap is completely unrealistic. Instead the gap needs to be bridged by having the developers move towards the customer. The developer needs to be completely bought in to the product, understand the problems it's solving, and how they'd best be solved.

This is precisely the reason why tools like Cucumber don't work: they are bridging that gap from the wrong direction. And if we go back to the agile manifesto, that approach carries the potential to skew the priority back towards contract negotiation rather than customer collaboration.

Customers don't write the stories

And neither do business analysts, development managers, or project managers. I'd go so far as to say that if you want to be "agile" and you've got a team of BAs then you should fire them all. Anybody getting between the developer(s) and the customer is stopping the developer from becoming the customer. Crucial detail will be missed and misplaced assumptions will go unchallenged. Sure, you'll still get a product out the door but it will either be what was agreed (which is often different to what is actually wanted) or it will not be as awesome as it could have been.

The people that get between developer and customer have the best of intentions: they're trying to let developers focus on writing code. I'll say it again though, a developer's job isn't to write code, it's to deliver a solution. It's a false economy to think you're saving the developer time by taking on the requirements analysis on their behalf. It will take longer for them to appreciate the requirements third hand than the half day it would take to learn them first hand and write them up themselves.

Roll up your sleeves, get out a set of index cards, some pens, and some A4 paper. No laptops, no iPads, no technology. Force the customer to explain the issue without computer aids as though you know nothing. Each of you draw up wireframes, compare the differences between them to see what assumptions have been made, then write the card together. Make it a tactile experience.

The result should be a concise description of the business problem, and the end-user benefit you're trying to deliver. Never more than a couple of sentences (maybe a few footnotes for pertinent implementation details that can't be neglected).

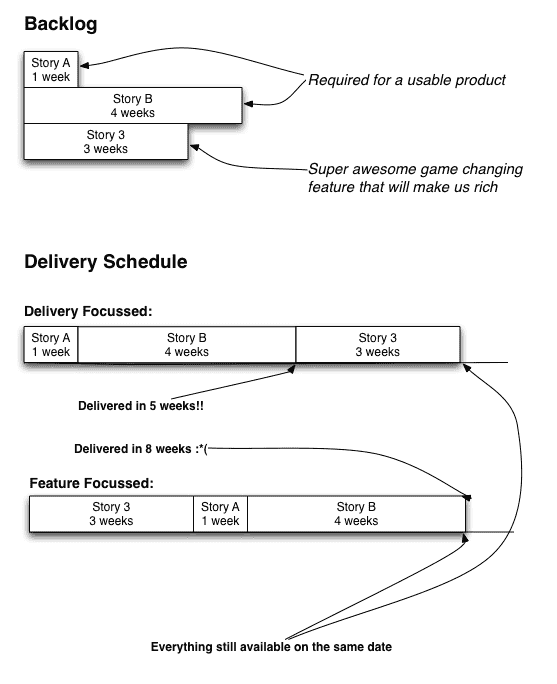

The most important stories get done first

This part of it will be difficult, if not impossible, for the developer to make a judgement call on. It is often very difficult even for the customer, but it has to be done. That being said, it shouldn't be as difficult as many people make it. If you're working on a new app that's not yet been released I've got a diagram to help you evaluate priorities:

It's also worth keeping a focus on getting the smallest usable feature set out the door as soon as possible. Eric Ries said a good way to get to that point is to outline the minimum features you think you need before you can release it publicly, and then you can probably reduce it by 90%. You always need much, much, much less than you ever expect.

If it's an existing app and you're having problems prioritising it might be worth speaking to some users. Just make sure you keep focus on doing the smallest and simplest thing possible to meet the requirement.

A good way to gauge if a story is really the next most important thing to get completed is to double the estimate. If that changes the priority in the customer's eyes, it wasn't as important as they thought.

If you're not doing the work, you don't get to estimate it

It took me a long time to appreciate this as a manager. I was often guilty of sizing up tasks based on how long I thought they would take me to complete. Then someone else picks one up and I wonder why it took so long. Truth is it would always take me longer too, but that is too easily forgotten. Do you really have a load factor of 1? I thought not.

How easy or difficult a task is depends on so many variables: familiarity with the code base, how recently you've solved a similar problem, access to an existing solution, etc., and each of those comes from fairly personal experience. Sit down, with a pair to rationalise the decision if you like, and come to a conclusion you're happy with. The important thing is that you are consistently optimistic or pessimistic in your approach. It's less important to get the estimate right than it is to be wrong on a relatively consistent basis. Everything averages out very quickly when it comes to the planning.

Don't mention time

Do everything you can to avoid estimating in hours or days. No matter how much you explain things like load factor when you see a story with "2 days" or "8 hours" written next to it people can't help but think that is how long the task will take. So you can't be entirely shocked when 3 days in they ask how that task that was meant to take 2 days is going. Avoid the awkward situations completely by not setting the expectation in the first place.

Estimate in points, or jelly beans, or anything other than hours or something that can be tracked by a watch or calendar.

Estimates aren't guesses, but they're not accurate either

Don't take a look at a story, close your eyes, and come up with a number. Take the time to analyse in detail what is required, what systems you'll need to talk to, read the 3rd party API/integration docs. Spend a whole day or more if you have to and write up a detailed list of tasks in the order you expect to tackle them, including any decisions on implementation you may come to, and put them at the bottom of your story card.

Time invested here pays for itself two-fold later in the iteration by maintaining focus on the minimum set of tasks that need to be completed and setting your direction each step of the way. Plus it gives you confidence that the task is achievable and unlikely to blow out.

When it comes to putting a number (points, jelly beans, whatever) on an estimate I now always advocate a 3 point system. 1 point roughly equates to a half day of effort (ssshhh, don't tell the customer ;), 2 points a whole day, 3 points 2 days. The reason is that it's too difficult to consistently estimate small tasks: if you estimate an hour for something, get snagged, and it takes you the first half of a day (not unusual), it has taken four times your estimate. At the other end of the spectrum you've got two problems: either a story that isn't really implementing the simplest solution possible, or a scope so broad that it's difficult to sit down and estimate it in the detail required to be confident in it.

If the tasks are obviously much smaller than 1 point, I'll try to group a couple of similar smaller tasks into a single 1 point task. If a task is bigger than 3 points, I'll split it into separate deliverable parts. "But it's really a 5 point task, everything has to be delivered at once!" Bollocks!
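To make the scale concrete, here is a rough sketch in Ruby. The constant and method names are made up for illustration and don't come from any planning tool; the only facts encoded are the three point values described above.

```ruby
# Hypothetical helper illustrating the 3 point scale described above.
# 1 point ~ half a day, 2 points ~ a day, 3 points ~ two days.
POINTS_TO_DAYS = { 1 => 0.5, 2 => 1.0, 3 => 2.0 }.freeze

# Returns the rough effort in days, or raises when the story needs
# grouping (smaller than 1 point) or splitting (bigger than 3 points).
def rough_days(points)
  POINTS_TO_DAYS.fetch(points) do
    raise ArgumentError, "#{points} points: group or split the story"
  end
end

puts rough_days(2)  # prints 1.0
```

Anything that doesn't land on the scale is rejected rather than rounded, which mirrors the rule of grouping small tasks and splitting big ones.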

Estimates for work that isn't scheduled are worthless

They might be useful for getting a high-level idea of when some story might be delivered, maybe, if it's ever actually scheduled. Remember one of the core tenets of agile is responding to change, and nothing impacts priorities like actually giving software to users. So it's not uncommon for that really-urgent-we've-gotta-have-it next feature to get bumped for a glaring hole a bunch of users pointed out. And then bumped again for something else. It comes back to expectation management again, and putting an estimate next to it adds a misplaced finality to where the story sits in the priority queue.

The other issue is that each iteration of development feeds back into the next. Some previously completed work may make a later story easier to implement, or it may actually highlight that something is more difficult than originally expected. Either way there are enough factors that can completely invalidate whatever estimates you come up with.

Execution

Once the planning is done, it is down to actually starting the work. It's not always a matter of ticking boxes off the work list though, the most effective teams I've worked in have shared some common traits.

Start mid-week

Few people love a Monday morning and getting motivated can sometimes be an issue. If you compound that difficulty by expecting everyone to be in the right mindset to sit down and write stories, well, it doesn't always work out for the best. There is no real long-term cost associated with moving the start of the iterations from a Monday to a Wednesday, but being able to look at a list of work first thing after a hazy weekend and just crack on with it is a huge win.

Have a fixed iteration length

I prefer to work with 2 week iterations, 1 week feels too short and 4 too long.

There is a comfort that comes from having some order to the world, and the importance of the general psyche and morale of a team is often underestimated. On those times that a story does take longer than expected, it's nice to know that you've still got a week or so to make it up. It's also great to have a regular sense of completion in predictable intervals, the dreaded risk of being stuck on the same task that is going to take months never materialises (partly because you never schedule a story with more than 2 days of effort, right?). There is a lot of subtlety in the impact this predictability has on developers which makes it hard to pinpoint all the benefits, but when combined they all make for a happier environment.

From a management perspective, it's great to have some clarity on when things are going to be delivered. And I'm not just talking about the next fortnight: you know that on a specific day each and every fortnight something is going to be delivered. So does the rest of the team. It makes planning much easier. People know the most convenient windows to take holidays, and which days are particularly inconvenient to stay home because you're planning with the customer.

Developer attention is a premium

Jason Fried from 37signals has a great video explaining why you can't work at work that I'd recommend watching. When you're deep in the coding zone nothing is more disruptive to your flow than someone coming and interrupting you to ask for your opinion on something completely unrelated. That 10 minute interruption can take 30 minutes to recover from fully to get you back into the same state. If they happen 2-3 times a day, that is up to 25% of the workday's productivity lost to distractions.

And as Jason mentions in the video the very action is basically a way of saying "Hey, my immediate needs are more important than whatever you're focussing on, give me attention". It's selfish and unhealthy for productivity. Remove phones. Email people if you have to, but don't expect a response that day. Inter-team or important but non-urgent questions should be posed via an instant messenger, chat program, or something that doesn't demand immediate interruption (like phones and meetings do). What about things that are urgent? Well that needs to be assessed on a case-by-case basis, but the truth is truly urgent things happen very very rarely. Almost everything can wait at least 4 hours.

It's here that a good development manager can work wonderfully, acting as bouncer and preventing direct access to developers while they're busy coding and filtering the urgency of all requests.
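The interruption figures quoted earlier in this section can be checked with a few lines of Ruby. This is only worked arithmetic: the 10 and 30 minute figures come from the text, while the 8 hour workday is an assumption.

```ruby
# Worked arithmetic for the interruption figures quoted above.
# The 8 hour workday is an assumption, not a measured value.
INTERRUPTION_MINUTES = 10
RECOVERY_MINUTES     = 30
WORKDAY_MINUTES      = 8 * 60

# Percentage of the workday lost to a given number of interruptions.
def percent_lost(interruptions_per_day)
  lost = interruptions_per_day * (INTERRUPTION_MINUTES + RECOVERY_MINUTES)
  100.0 * lost / WORKDAY_MINUTES
end

puts percent_lost(3)  # prints 25.0
```

Two interruptions a day works out at roughly 17% on the same assumptions, so the "up to 25%" figure corresponds to the three-a-day case.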

Pair Programming

A fairly contentious part of agile is pair programming. When it works well, it's hugely efficient and carries lots of additional benefits (higher quality code, quicker delivery, lower documentation requirements, less "single developer" business risk, etc.) but when it's implemented poorly it's just a waste of a resource. In most places I've worked it sadly falls into the latter camp, and it's because people are just paying lip-service to the practice and treating it as a mentor/tutor type role rather than a pair actively writing code together.

That's not to say it doesn't also offer great potential as an approach to training. The important thing is that both participants need to be equally involved and active in writing the code.

Owning the workspace

The biggest problem when it doesn't work is normally down to the setup of the workspace. Generally Person B brings their chair over to share Person A's desk, and Person A stays almost exactly where they were to begin with. It's guaranteed to fail. Here's what you need to do:

- Share a single monitor, you both need to be looking at the same screen. They're big enough these days that you can see everything you need with appropriate window management.
- Each person gets their own keyboard and mouse, no co-piloting on shared inputs and it's not fine to have one person with a keyboard and the other person using the laptop it's connected to.
- Divide the screen in half, then mark that virtual line on the desk with some tape or a marker… right down the desk. Each person gets their side and never should they encroach on the other half.

It might all seem a bit contrived and naff, but it makes a difference to the efficiency of the operation. Body position plays on the subconscious and determines whether you feel comfortable using the keyboard in front of you. Sit too far to the side and you start to feel that you're a spectator on somebody else's computer; too far in front and you feel like you're a pilot and co-pilot rather than peers.

Everyone is in their own context

You're going to know what programs you need open to get the job done. For me it's an IDE, a terminal or 3, and a web browser or the running application. Agree on a common screen layout where everything can be seen at the same time, and then mandate it across the entire team (I've found Divvy really useful to keep it consistent). It means that when you come to someone else's computer it doesn't feel foreign, it's just like being on yours but at a different desk.

Most important though is that even though you're both working on the same task together on the same machine, you're probably both in slightly different head spaces and thinking about a slightly different aspect of the problem. Quickly switching between applications to satisfy your own curiosities can have a terrible impact on whatever it was your pair was thinking about. Being able to see everything at the one time prevents you from unexpectedly switching context on each other.

Make sure you get a monitor large enough to make it work. Given you're going to spend ~8 hours each and every day staring at it, it's not sensible to buy a cheap screen.

Silence is golden

This is difficult to nail until you've developed a good working relationship with your pair, but the best communication between the two of you is the non-verbal. For the same reasons as above, you can never be sure what the head space of your pair is, and interrupting them to mention a typo could completely break their train of thought. Instead wait for an obvious pause or context switch and simply point it out. Eventually it may get to the point where you know each other well enough that even more subtle cues like a shift in posture give away that you need to re-think the code you just wrote. But at least you can then do it once you've got your current thoughts committed to screen.

80 characters is enough for anyone

Like many of the tips on here, this one was either passed on to me by Graham Ashton or refined further by working with him for 3 years. It seemed particularly pedantic to me, but I went along with it because it really wasn't that difficult to adhere to and he was adamant that he'd accept nothing less. And it's this: no line of code should ever extend beyond 80 characters.

I now try to enforce it wherever I go.

It took me a long time to fully appreciate how important it is, at least a year, but code readability is drastically improved and that is always a good thing. When pairing though, it is mandatory. You don't have the luxury of scrolling indefinitely to the right to read the full line of code to see the intention because doing so will interrupt the flow of your pair. Worse still, it actually takes the bulk of the code off the screen.

It also prevents a nasty scenario where something important that drastically changes the intention of a line of code is cleanly hidden by the window edge and the real flow of the application is the opposite of what you thought was happening. Say something like the following:

```ruby
def my_example_method
  raise "Here is an example of something you would think gets raised!!!" unless coder_reads_this_part?
end
```

Refactor any line that stretches over 80 characters. It only requires a little thought, makes the intention clearer, ensures you can see all the important code at once, and stops you from having to break someone else's focus.
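One possible refactoring of the example above is sketched below. The guard clause is just one option among several, and `coder_reads_this_part?` is stubbed here only so the snippet runs on its own.

```ruby
# Stubbed so the sketch is self-contained; in the example above this is
# the condition that was hidden past the window edge.
def coder_reads_this_part?
  false
end

def my_example_method
  # The condition now sits at the start of its own line, in plain view.
  return if coder_reads_this_part?

  raise "Here is an example of something you would think gets raised!!!"
end
```

The behaviour is unchanged: the error is still raised only when the predicate is false, but every line now fits within 80 characters and the condition can't be missed.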

Test Driven Development

Tests first, then the code. It's not just about ensuring you've got stable and working code, it's about keeping focussed on doing the smallest thing possible to make the tests pass.

This approach works great with pair programming, and here's the formula: One person writes a test, the other makes it pass. Once it's passing the person that just wrote the code writes the next test and hands it over. While one of you is trying to write a crafty test to keep the other busy for a while, your pair has already devised a cheeky way to have it always return the value you want and has devised a new test to catch you out.

It's generally a quick back and forth iterative process where you're each trying to outfox the other with edge cases or overly simplistic implementations that pass because the tests aren't robust enough. It's almost game-like, and eventually you both come to a stalemate. At that point you sit back and realise the story is complete, and with excellent test coverage.
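Here is a small sketch of what two rounds of that back and forth might leave behind, using Minitest. The `Basket` class and its behaviour are invented purely for illustration; the comments mark which round forced which piece of code.

```ruby
require "minitest/autorun"

# Hypothetical example class, made up to show the back and forth.
class Basket
  def initialize
    @prices = []
  end

  def add(price)
    @prices << price
    self
  end

  # Round 1: a hard-coded `0` was enough to pass the first test.
  # Round 2: the second test forced a real implementation.
  def total
    @prices.sum
  end
end

class BasketTest < Minitest::Test
  # Round 1, written by the first person.
  def test_empty_basket_totals_zero
    assert_equal 0, Basket.new.total
  end

  # Round 2, written by the second person to catch the hard-coded zero.
  def test_total_adds_up_prices
    assert_equal 7, Basket.new.add(5).add(2).total
  end
end
```

Each new test is the smallest one that breaks the previous cheeky implementation, which is what keeps the code from growing beyond what the story needs.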

Don't break the build

A continuous integration server is a must as is some form of reporting of any errors raised in production, and problems on both must be treated with a suitably high priority. Anything that is deemed "flakey" and raises an alert for non-legitimate reasons needs to be fixed immediately. As soon as the team starts to doubt the notifications, legitimate problems start to slip through too. It's a bit like the broken windows theory, if you allow these things to go by without action then it breeds complacency and soon some failing tests become accepted as the norm.

That all means that before any developer commits any code back upstream, they run all the tests… all the time. They are there for a reason, and it's inexcusable to not be running them. The CI box is there as a backstop only.

Deliver at the beginning of the end

If you're starting your iterations every second Wednesday then code needs to be completed at the close of play the Monday immediately prior. That means you've got a full day to deploy the code to production and catch any unexpected errors. The other benefit of the mid-week approach is that on the rare occasions it all goes pear-shaped people are more inclined to stay back a little late and fix it. Trying to keep people back after hours on a Friday isn't just difficult, they've often already mentally checked out and off on their weekend and you run the risk of actually making a bad situation worse.
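The calendar arithmetic can be sketched in Ruby as follows. The helper name and the example date are illustrative only; the fortnightly Wednesday start and the Monday cut-off come from the text.

```ruby
require "date"

ITERATION_DAYS = 14

# Hypothetical helper; the names are made up for illustration.
def iteration_dates(start)
  unless start.wednesday?
    raise ArgumentError, "iterations start on a Wednesday"
  end

  next_start = start + ITERATION_DAYS
  {
    code_complete: next_start - 2, # close of play on the Monday
    deploy_day:    next_start - 1, # the Tuesday kept free for release
    next_start:    next_start
  }
end

dates = iteration_dates(Date.new(2024, 1, 3))
puts dates[:code_complete]  # prints 2024-01-15
```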

If it all goes seamlessly and you roll-out and have a day free, fantastic! Time to look at that ever growing list of bugs your users have been sending back that haven't been getting scheduled into an iteration. You can always find small tasks to fill the day that are incredibly useful but not super urgent.

Avoid bringing planning and unscheduled work forward, you'll skew your workload for the next iteration and run the risk of over-stressing developers and/or over committing. The benefit of maybe squeezing in a day of development isn't worth the additional risks.

Review

The code is released, people are using it, and everyone is happy. Now it's time to look back and see how things went, what went really well and what could be improved upon.

Run a retrospective

Before the planning session, get all the team in the room to talk about the good and the bad of the previous iteration. Do it quickly: you should need an absolute max of 30 minutes but you can probably do it in much less. Get index cards or post-it notes of two different colours, one for "went well" and one for "needs improvement", and everyone has to write at least one thing on a card of each colour. There are no limits to how many things people can list though. Allow it as a cathartic forum for all issues to be brought out in the open.

Put all the cards up on a board (grouping similar topics), so everyone can see the balance of opinion on how things went. Ensure everyone understands what all of the cards mean and what they are referring to. Now everyone gets 3 points to spend on the "needs improvement" cards. They can spread the points out across 3 cards or put them all onto a single card (or the obvious option in between). The team then agrees to make a concerted effort during the next iteration to improve upon the 3 cards with the most points.
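The voting is easy enough to do by counting marks on the cards, but for a distributed team the tally could be sketched like this. The card names, people, and method name are all invented for illustration.

```ruby
# Hypothetical tally of the voting described above. Card names and
# votes are invented for illustration.
POINTS_PER_PERSON = 3

# votes: { "person" => { "card" => points spent on that card } }
def top_cards(votes, limit = 3)
  votes.each do |person, spent|
    total = spent.values.sum
    unless total == POINTS_PER_PERSON
      raise ArgumentError, "#{person} spent #{total} points"
    end
  end

  totals = Hash.new(0)
  votes.each_value do |spent|
    spent.each { |card, points| totals[card] += points }
  end

  # Highest score first; ties come out in no particular order.
  totals.sort_by { |_card, points| -points }.first(limit).map(&:first)
end

votes = {
  "Ann" => { "flaky CI" => 2, "late stories" => 1 },
  "Bob" => { "flaky CI" => 3 },
  "Cat" => { "late stories" => 2, "slow deploys" => 1 },
  "Dan" => { "noisy office" => 2, "late stories" => 1 }
}
p top_cards(votes)
```

With these made-up votes the three cards chosen are "flaky CI", "late stories", and "noisy office".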

It's only after a couple of iterations with a consistently re-appearing top-rated problem that I'd consider going back and looking at some of the tools I mentioned throwing out at the top of this article. And even then, only if you're certain they're the best way to fix the most pressing issue facing your team. If I'm honest, I don't think it's going to come up.

Split partially-completed stories

Occasionally a story only gets partly done, and there is much gnashing of teeth when people have to work out what to do with it. Because you've been taking an iterative, test driven approach to development you've got a bunch of code that is written, tested, and passing even though the story is incomplete. Fantastic!

Look at what is left to complete and write up a suitable story to reflect it, then go back and revise the previous card to reflect what was actually done. Now look at the original estimate and try to work out what percentage of the story you've completed, then apply the appropriate number of points to each story (keeping in mind that if you're following along with my 3 point/jelly bean system, 3 points = 2 days, so if you've done half you've got 1 day, or 2 points, on each).

That way you get an accurate reflection of both what was completed in the previous iteration and a fair idea of what remains, based on your original estimates (remember, we wanted continual optimism. No revising estimates retrospectively).

Calculate your velocity

Now you know how much work you got completed, you can with a fair degree of confidence predict how much you can do next iteration. After a few iterations, you can look back and calculate the average for the past two or three to flatten out any particularly fast or slow ones and get a good feel for what is achievable.
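As a minimal sketch, that rolling average is only a few lines of Ruby. The method name, the default window of three iterations, and the sample history are assumptions made for illustration.

```ruby
# Hypothetical helper: average the points completed over the most
# recent iterations to smooth out unusually fast or slow ones.
def velocity(completed_points, window = 3)
  recent = completed_points.last(window)
  return 0.0 if recent.empty?

  recent.sum.to_f / recent.size
end

history = [14, 9, 16, 11]  # invented points completed per iteration
puts velocity(history)     # prints 12.0
```

Only the last three iterations count here, so the 14 from the oldest iteration no longer influences the prediction.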

Under-promise, over-deliver

No kid likes waking up Christmas morning to find that the present they asked Santa for is nowhere under the tree. Likewise, no customer likes being told you're going to give them something in two weeks and for it to not materialise. So if you're uncertain, you're better off scheduling too little work and then pulling an extra story in later in the iteration than over-committing. It's not just about managing customer expectations though, it's about limiting stress on developers by keeping the targets realistic.

Conclusion

These are the things that have contributed to making it work at places I've enjoyed working at. The most important thing is to measure the overall success of your process by its ability to create a happy and productive environment; good things will naturally flow from it. At the very least you should apply the principles of agile to the implementation of the process itself. Nothing is set in stone: pause at regular intervals to review the success, and adjust the most important aspects to give people what they want.